- Where does your data come from?

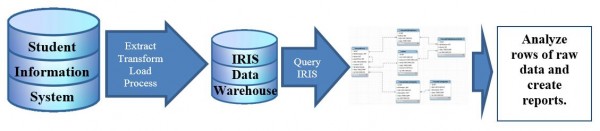

While some may think that data is magically delivered by the data stork, data fairies or data gnomes, the real answer is much less entertaining. The district office maintains a data warehouse (called the Institutional Research Information System, or IRIS) populated from the Student Information System (SIS). OIE staff use a SQL browser and knowledge of relational databases to create queries that extract data from IRIS. This gives us the flexibility to create custom reports with a wide range of data that systems like BOEXI cannot provide.

Data is extracted from SIS, transformed into usable values, and loaded into IRIS periodically throughout the year in a process called ETL (extract, transform, load). This ETL occurs weekly for most student enrollment data and at set reporting snapshots (such as the 45th Day or the end of term) for other data.

- Why doesn’t your data update every day?

Institutional research offices like OIE were historically created to handle retrospective reporting on student and college data. In other words, how can data from past semesters help us make better decisions moving forward? However, current trends in business intelligence and student analytics are moving toward the use of real-time data to enable more immediate interventions. Tableau Analytics are underway to help make on-demand data and dashboards a reality at MCC.

- How long will it take to get my data?

The further ahead you can plan, the more likely we will be able to meet your needs. For most standard data requests, we ask for at least a two-week lead time. More complicated requests may take longer.

One factor in request turnaround time is complexity. Some data is fairly easy for us to obtain. Many queries have already been created and can be easily refreshed with new data. However, custom data requests often require the creation of new queries or the modification of previously developed queries. This query-building process can take time, especially for large or complicated data sets. We work hard to validate and double-check data to avoid errors. We rarely use “click and run” reports from BOEXI because they seldom provide the data we need.

In addition to the sometimes lengthy query creation process, OIE staff also handle numerous other responsibilities that may delay custom reporting, including annual reports, committee service, planning, accreditation, survey administration, program review, predictive analytics, grants and much more.

- I’m seeing slightly different numbers for the same measure/metric. Why are the numbers not the same?

We are occasionally approached with questions on why data for the same/similar measure is different between reports, and, sometimes, this causes stakeholders to question the numbers they are seeing. Almost always, we are able to explain differences between reports in the two ways outlined below. When trying to compare data, it is important to take into account the data source, the point in time the data was collected and the parameters used to extract the data.

- Differences may come from comparing reports that were developed using different sets of parameters or different points in time. Sometimes, reports get updated throughout the semester at different reporting snapshots (e.g. beginning of term, 45th Day, end of term, or by week), so the data from a Fall 2014 beginning of term report will likely be different than the data from a Fall 2014 45th Day report. Sometimes, different stakeholders will request slightly different parameters in their reports that may cause differences in reporting, such as including/excluding dual enrollment courses.

- Differences may also stem from reports using different data sources. When using different data sources, such as IRIS, BOEXI, or a testing service’s internal database, data will often be similar but not match exactly. This is generally due to how each system captures data and the point in time at which the data is accessed. Some systems only produce “real-time” data while other systems use reporting snapshots that save data from historical points in time.

- What data is available?

While we have access to a wide range of data, we do not have access to everything. Below is a sample of items that are available.

The items fall into three categories: Student Data, Performance Data and Class Data.

Name*

Contact info (emails, address, phone)*

Basic Demographics (Age, Gender, Ethnicity)*

Citizenship

Visa Type

Tuition County Code

Residency Code

Primary Language*

First Generation Status*

Previous Education*

Current Intent*

New/Former/Continuing Status

High School Grad Status

High School Attended and Grad Year

GI Codes

Hours Worked Per Week*

Academic Load

Primary Time Attending

Veteran Status*

Financial Aid Disbursed and aid type

Student Goals (“majors”)*

Student groups (e.g. Ace, Honors)

Official Transfer-in credits

Terms Attended

HS Dual Students

Attempted/Earned Hours

Term/Cum GPA

Course Retention/Completion (Grades)

Term-term persistence

Degree/Certificate Awards (title, date/term conferred)

Enrollment status (Dropped, Enroll, Withdrawn)

Transfer Data (National Student Clearinghouse)

General data about MCC students attending public state universities

Placement test scores

Basic Class info (prefix, number, title)

Class status (Active, Cancelled)

Location

Instructional Mode

Department

Grading Basis

Day/Eve Code

Course Meeting times and days

Course length (weeks)

HS Dual Courses and HS

Combined section codes

Total enrollment

Class start/end/cancelled dates

Credit Hours

FTSE

Instructor

Class Load

Instructor Type (Res/Adj/Service)

Honors Class

Noncredit classes

*Indicates data is self-reported by students.

Glossary of Frequently Used Terms

Headcount – A count of the unique number of individuals.

Numbers reported as a headcount contain no duplication.

Enrollment – The total number of enrollments generated by students.

A student is duplicated for each class they take.

FTSE – Often pronounced “foot-see,” FTSE stands for Full-Time Student Equivalents.

The district and state both use FTSE to determine funding because it takes into account credit hours generated by students rather than headcount or enrollment numbers that may overcount part-time students. While the actual fiscal year FTSE calculation can get complicated (see below), it can be summarized fairly easily:

- FTSE in a term = total credit hours / 15

- FTSE in a year = total credit hours / 30

Example to Illustrate Headcount, Enrollment and FTSE

- Student A is enrolled in three three-credit-hour courses for a total of nine credit hours. They generate 1 headcount, 3 enrollments and 0.60 FTSE (9 credit hours divided by 15).

- Student B is enrolled in three three-credit-hour courses and two four-credit-hour courses for a total of 17 credit hours. They generate 1 headcount, 5 enrollments and 1.13 FTSE (17 credit hours divided by 15).

- Student C is enrolled in one one-credit-hour course for a total of one credit hour. They generate 1 headcount, 1 enrollment and 0.07 FTSE (1 credit hour divided by 15).

- Together, these three students generate 3 headcount, 9 enrollments and 1.8 FTSE (27 credit hours divided by 15).
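
The worked example above can be reproduced with a short Python sketch. The record layout (one row per class taken, holding a student ID and the credit hours for that class) and the function name `summarize` are assumptions made for illustration; they do not reflect how IRIS stores data.

```python
# Divisor from the summary formula: FTSE in a term = total credit hours / 15
CREDIT_HOURS_PER_TERM_FTSE = 15

# Hypothetical enrollment rows: (student_id, credit_hours), one row per class taken.
enrollments = [
    ("A", 3), ("A", 3), ("A", 3),                      # Student A: 9 credit hours
    ("B", 3), ("B", 3), ("B", 3), ("B", 4), ("B", 4),  # Student B: 17 credit hours
    ("C", 1),                                          # Student C: 1 credit hour
]

def summarize(rows):
    """Return (headcount, enrollment, credit_hours, ftse) for one term."""
    headcount = len({student for student, _ in rows})  # unique individuals
    enrollment = len(rows)                             # one per class taken
    credit_hours = sum(hours for _, hours in rows)
    ftse = credit_hours / CREDIT_HOURS_PER_TERM_FTSE
    return headcount, enrollment, credit_hours, ftse

headcount, enrollment, credit_hours, ftse = summarize(enrollments)
print(headcount, enrollment, credit_hours, round(ftse, 2))  # 3 9 27 1.8
```

Headcount uses a set so each student is counted once, while enrollment counts every row, which is why the same student can add several enrollments but only one headcount.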

Short-term FTSE – This is where things get complicated, as defined by the state.

For any class that is not in session as of the Fall or Spring Census Date (45th Day), half of the FTSE credit is awarded when a student starts a class and half is awarded when a student completes a class. This short-term FTSE calculation is used for summer term courses as well as short-term courses in Fall and Spring.
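
As a rough illustration of the half-and-half rule, the sketch below returns the share of an enrollment's FTSE credit that would be awarded. The function name and the boolean flags are hypothetical, and the sketch assumes a student cannot complete a class without first starting it. It does not define how the full FTSE credit for a short-term enrollment is computed, since that is not spelled out here.

```python
def short_term_award_fraction(started: bool, completed: bool) -> float:
    """Share of an enrollment's FTSE credit awarded under the half/half rule.

    Assumption: completion only counts if the student also started the class.
    """
    fraction = 0.0
    if started:
        fraction += 0.5      # first half: awarded when the student starts
        if completed:
            fraction += 0.5  # second half: awarded when the student completes
    return fraction

# Hypothetical usage: full_credit is whatever FTSE the enrollment would earn in full.
full_credit = 0.2
print(full_credit * short_term_award_fraction(started=True, completed=False))  # 0.1
print(full_credit * short_term_award_fraction(started=True, completed=True))   # 0.2
```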

Fiscal-Year FTSE – The official FY FTSE calculation also adds some complication.

Per the Arizona Revised Statutes, FY FTSE is calculated by taking the average of Fall and Spring FTSE and adding short-term FTSE to that. For example, if Fall FTSE was 11,000 and Spring FTSE was 9,000, the average Fall/Spring FTSE would be 10,000. If short-term FTSE for that FY was 2,139, then the total FY FTSE would be 12,139 (10,000 + 2,139).
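
The arithmetic in this example can be written as a one-line function. The function and parameter names are illustrative only; the formula is the one described above (the average of Fall and Spring FTSE, plus short-term FTSE).

```python
def fiscal_year_ftse(fall_ftse: float, spring_ftse: float, short_term_ftse: float) -> float:
    """FY FTSE = average of Fall and Spring FTSE, plus short-term FTSE."""
    return (fall_ftse + spring_ftse) / 2 + short_term_ftse

# Figures from the example above.
print(fiscal_year_ftse(11_000, 9_000, 2_139))  # 12139.0
```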

Fiscal Year – In MCCCD, the fiscal year runs from July 1 to June 30.

For course-based calculations (headcount, enrollment, FTSE, etc.), the FY for a course is determined by course start date. For student degree calculations, MCC determines FY by degree conferral date.
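
A minimal sketch of assigning a date to a fiscal year is shown below. It assumes the fiscal year is labeled by the calendar year in which it ends (so July 1, 2019 through June 30, 2020 would be FY 2020); that labeling convention is an assumption, not something stated here. The date passed in would be the course start date for course-based measures or the conferral date for degrees.

```python
from datetime import date

def fiscal_year(d: date) -> int:
    """Fiscal year (July 1 to June 30) containing d.

    Assumption: the FY is labeled by the calendar year in which it ends.
    """
    return d.year + 1 if d.month >= 7 else d.year

print(fiscal_year(date(2019, 8, 20)))  # 2020 (a course starting in Fall 2019)
print(fiscal_year(date(2020, 6, 30)))  # 2020 (last day of the same FY)
print(fiscal_year(date(2020, 7, 1)))   # 2021 (first day of the next FY)
```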

Academic Year – The Academic year starts with the Fall semester.

So, the term sequence in any academic year is Fall, Spring, Summer.

Snapshot – These are points in time during the semester when the data in IRIS is frozen and saved for future reporting. These include:

- Beginning of Term (BT) – The Saturday after the first week of classes

- 45th Day (45) – The 45th day of classes, our official census date

- End of Term (ET) – About one month after a term ends

- Weekly (WK) – Every Saturday. (This load is limited to student enrollment and course data. Some data, such as degree and certificate awards, are only captured during the end of term snapshot.)

Completion – Generally, we use completion to mean course completion.

Completion can be both successful and unsuccessful, as grades of A, B, C, D, F, P and Z all count as completed. Completion can also be used more generically when discussing college completion or graduation.

Retention & Persistence – Usually, when the words retention and persistence are used in conversation within Maricopa, the meaning is the number or percent of students who continued their enrollment from one term to the next. For example, a student who is enrolled in Fall 2019 and stays enrolled in Spring 2020 is said to have persisted from Fall to Spring. We can also say they were retained by the college. Retention is typically thought of as an institutional measure (e.g. how many students did a program, college, or district retain) and persistence is often considered to be a student measure (e.g. did the student persist to the next semester).
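
Term-to-term persistence can be sketched as a set intersection. The student IDs and the function name below are made up for illustration, and the rate is computed as the share of first-term students who also appear in the next term, which is one common reading of "number or percent of students who continued."

```python
# Hypothetical sets of student IDs enrolled in each term.
fall_2019 = {"s1", "s2", "s3", "s4"}
spring_2020 = {"s2", "s3", "s5"}  # s5 is new in Spring and does not affect Fall persistence

def persistence(first_term: set, next_term: set):
    """Return (students who persisted, persistence rate) from first_term to next_term."""
    persisted = first_term & next_term  # enrolled in both terms
    rate = len(persisted) / len(first_term) if first_term else 0.0
    return persisted, rate

persisted, rate = persistence(fall_2019, spring_2020)
print(sorted(persisted), rate)  # ['s2', 's3'] 0.5
```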

Retention can also be used at the course level. If a student completes a course, whether successful or not, they are considered retained. Course retention and completion are basically the opposites of withdrawal.

Drop – Drops are sometimes confused with withdrawals, but they are different.

A drop occurs when a student removes a course from their class schedule during the approved drop period (usually during the first week of classes or before classes start). A drop is an enrollment status (e.g. “enrolled in a course” or “dropped from a course”) whereas a withdrawal is a course grade. A drop does not appear on student transcripts; a withdrawal grade does.

Withdrawal – Withdrawals are course grades (W or Y).

Withdrawals can be student, faculty or staff initiated based on a wide variety of reasons. Students can withdraw themselves from courses until a certain date in the term; after that date, withdrawals must be approved and/or initiated by faculty or staff. When we look at course retention, we break grades into groups of successful completion (A, B, C, P), unsuccessful completion (D, F, Z) and withdrawal (W, Y).
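
The grade groupings above can be expressed as a small lookup. The sample grade list and the function name are hypothetical, and the retention rate shown (completed grades divided by all graded enrollments) is a sketch of one way to summarize the groups, not an official formula. Drops are not grades, so they never appear in this list.

```python
from collections import Counter

# Grade groups as described above.
SUCCESSFUL = {"A", "B", "C", "P"}
UNSUCCESSFUL = {"D", "F", "Z"}
WITHDRAWAL = {"W", "Y"}

def grade_group(grade: str) -> str:
    """Map a course grade to its course-retention group."""
    if grade in SUCCESSFUL:
        return "successful completion"
    if grade in UNSUCCESSFUL:
        return "unsuccessful completion"
    if grade in WITHDRAWAL:
        return "withdrawal"
    raise ValueError(f"Unrecognized grade: {grade!r}")

# Hypothetical grades for one class section.
grades = ["A", "B", "F", "W", "C", "Y", "P", "D"]
counts = Counter(grade_group(g) for g in grades)

# A student is retained if they completed the course, successfully or not.
retained = counts["successful completion"] + counts["unsuccessful completion"]
retention_rate = retained / len(grades)
print(dict(counts), retention_rate)
```

With these sample grades, four are successful completions, two are unsuccessful completions and two are withdrawals, so six of eight enrollments (75%) count as retained.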